Request: GET /rd/TdcfliKN0j9dT-bIMpo-GynUNR63kfnDsJn_YOP8uurTmlvy7C3oKnJtb1Mi-CI_fGsHJ72O49dM1IzXDCPNuPf3OfEb21w5hkGdV8ny_2u2pKo6yBgMbPCdAF-ti1uomfp3mWcB_K9M8PitpDMkg./x-Mad-VYWQz_lpphY5LN_fnkid_zqmI-i5AYJgziAl93kYhdvtlwVijRDmSGIifl-ouZki2eTWit7zi38raKiYkKtPqKSWftIfwFqIHD0bXua4z_LcrHQOnKwCWSNp0kJKcowVQSza8XJ88-TWJfA.

This is what I captured from the HTTP headers:

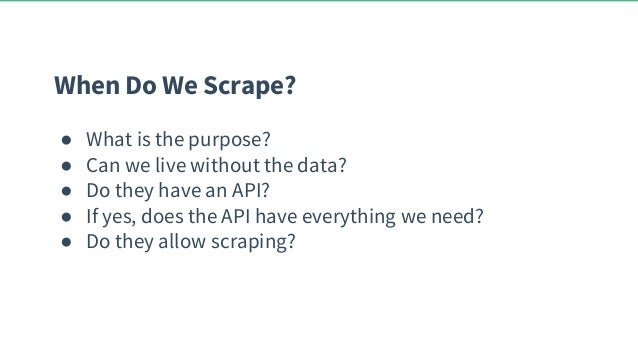

With the site I'm currently scraping, when I request 30 of its URLs consecutively, the server flags my connection as "unusual traffic" and a Google reCAPTCHA appears. I would like to know what methodology I should implement to avoid that reCAPTCHA and still follow the redirecting URLs without problems. The only condition is: no proxy or VPN usage.
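Since the challenge shows up after a burst of 30 consecutive requests, one approach that needs no proxy or VPN is simply to pace the requests. The following is a minimal sketch, not a guaranteed fix: the names `paced` and `backoff_delay` are hypothetical, and the delay values are assumptions, because the site's actual threshold is unknown.

```python
import random
import time


def paced(urls, min_delay=5.0, max_delay=15.0, sleep=time.sleep):
    """Yield URLs one at a time, pausing a random interval between them.

    min_delay and max_delay (seconds) are assumed values, not known limits
    of the site. `sleep` is injectable so the pacing can be checked without
    actually waiting.
    """
    for index, url in enumerate(urls):
        if index > 0:
            # Randomized pause so requests do not arrive at a fixed rhythm.
            sleep(random.uniform(min_delay, max_delay))
        yield url


def backoff_delay(attempt, base=30.0, cap=600.0):
    """Seconds to wait after the server has flagged the traffic.

    Doubles the wait on each consecutive flagged response (attempt 0, 1, 2, ...)
    and never exceeds `cap`. Both defaults are assumptions.
    """
    return min(cap, base * (2 ** attempt))
```

In use, the scraper would iterate over `paced(list_of_urls)` and fetch each yielded URL with whatever HTTP client it already uses. If a response turns out to be the "unusual traffic" page, it would wait `backoff_delay(attempt)` seconds before retrying instead of sending the next request immediately.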

I normally develop web scrapers for private use (I mean with no financial expectations) and for one reason: it saves me a lot of time each day. For ethical reasons I would like to point out that the content of the website mentioned here is offered completely for free, with no registration needed, and I'm not breaking any of their rules or any law.
